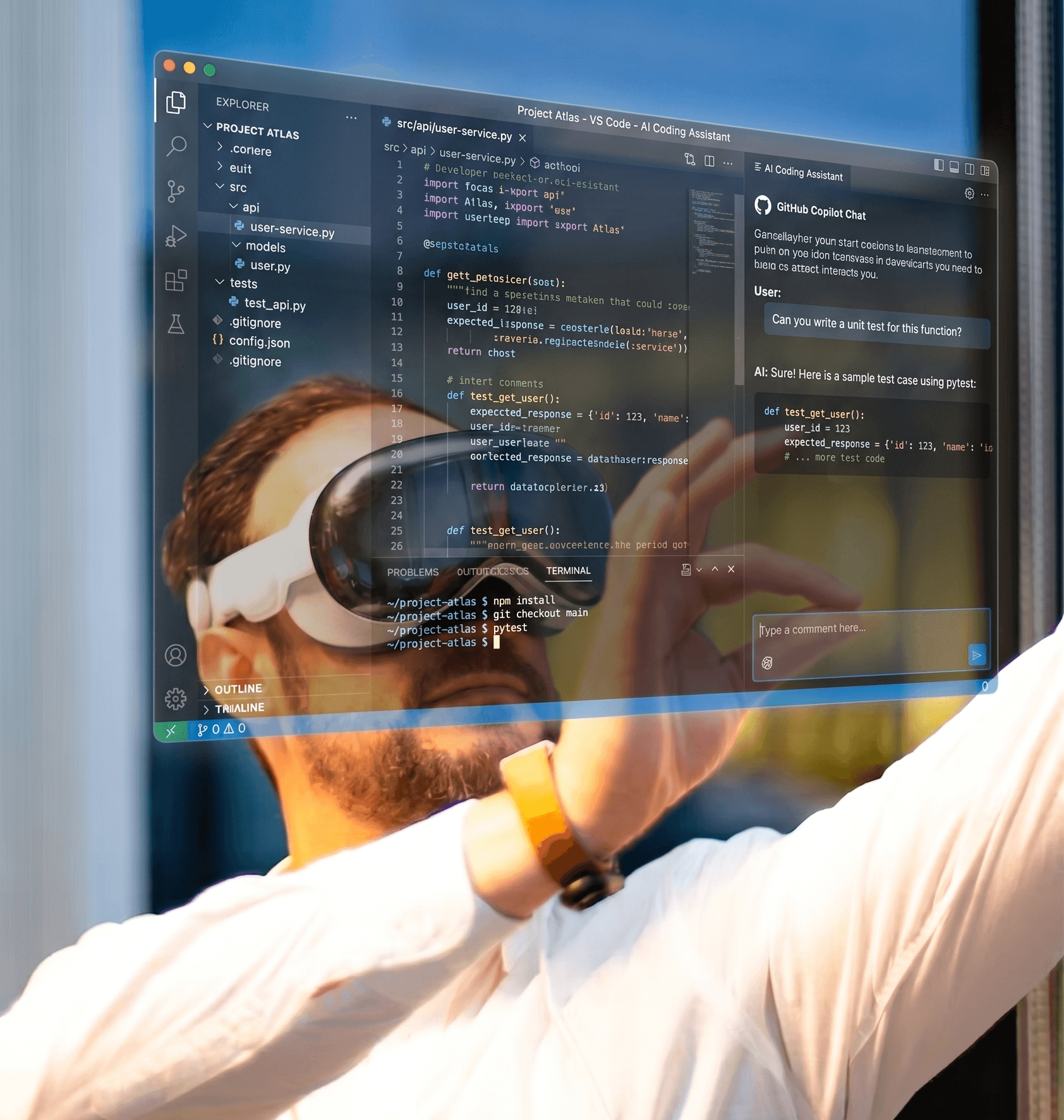

How Software Engineering could change in the next 5 years

Dominic Willson

Co-Founder & Chief Executive Officer

14 May 2026

From inside an AI-first engineering team, here are our predictions for what software engineering really looks like in 2031, and why "Chief Problem Solver" might be the better job title.

Software Engineering in 2031

You’ll probably have noticed the strange pattern in how the tech industry talks about AI and software engineering right now. On one hand, in 2024 we had Sundar Pichai saying around 25% of new code at Google is AI-generated, with reports suggesting that’s now closer to 75% in 2026. Dario Amodei at Anthropic has predicted AI will write essentially all code within twelve months. Marc Benioff is openly debating whether Salesforce hires any engineers at all this year (which builds on a bold statement the year prior).

On the other hand, METR, a serious research outfit, ran a controlled study in 2025 with experienced open-source developers using Cursor and Claude on real codebases. The developers predicted they'd be 24% faster with AI. They believed afterwards they'd been 20% faster. They were actually 19% slower.

Both things are true. They're now just describing two different jobs that used to share a name. I’m starting to think "Software Engineer" is giving off the wrong impression and we should all be striving to be Chief Problem Solvers.

That's the real story of the next five years. Not the "death of the coder" headlines, which get clicks but completely miss what's happening. The job is splitting. Some bits are being commodified into oblivion. Other bits are getting more valuable than ever.

I’ve been writing code in some form or another for over 25 years (urgh), and I still do, for now. It has changed more in the last 6 months than in the 24 years before them. I’m inclined to agree with Dario.

Enough about the now.

Here are some predictions for what software engineering could look like over the next five years, from inside a team that constantly tries to be ahead of the curve (and, honestly, can't help itself when shiny new tech is involved).

0. Opening the taps on innovation

We are already seeing this one happen every day, and by 2031 it’ll be second nature to most organisations.

There’s already a huge shift driven by the cost floor and the risk profile of bespoke software collapsing dramatically at the same time. If you aren’t copiloting, you’re already getting left behind.

That opens the door to a whole tier of innovative organisations who were previously priced out, or quite reasonably nervous about investing seriously in their own technology. The company that always knew its operations could be transformed by software but couldn't justify a seven-figure multi-year build. The local authority whose digital team has been firefighting legacy for a decade. The charity, the family business, the low-margin retailer. They can now plausibly invest in software that genuinely fits their problem, at a fraction of the cost. That's what we really mean by innovation.

I think we’ll see more large behemoth organisations fail and be replaced by innovative fast movers who are embracing change.

Is there a Microsoft in 2031, or is it just a failing company OpenAI bought for some patents or its data centres?

1. The job becomes a hybrid: BA, product engineer, solutions architect and software engineer in one person

The clearest shift is that the boundaries between these roles are dissolving, and the same thing is happening inside engineering. Backend, frontend, API specialist: those tidy little kingdoms we’ve magic’d up over the last few years are gone by 2031.

The full-stack architect wins. Or perhaps just Chief Problem Solver.

The specialist work doesn't disappear; it just stops being the bit that creates the value.

The day-to-day looks less like "implement this ticket" and more like: sit with a warehouse manager for an hour, understand what's actually slowing them down, design the solution, prototype it that afternoon, ship a working version the next morning.

The skills that matter are problem framing, domain understanding and judgement about what not to build. Just because you can now build some things 100x faster than you ever could doesn’t mean you should. Just keep a human in the loop (for now).

Greenfield is the easy bit. The harder, more valuable territory is the fifteen-year-old codebase with implicit knowledge baked into every weird abstraction. AI tools are getting better here, but humans are still a must for bridging the complex context. That's exactly the territory of the local authorities and regional manufacturers I mentioned above, and it's where Chief Problem Solvers earn their keep.

Andrej Karpathy calls this "agentic engineering": you're not writing code 99% of the time, you're orchestrating agents who do. Kent Beck, asked recently what AI changed for him after 50 years of programming, said "90% of my skills went to zero dollars and 10% of my skills went 1000x." The catch: that 10% only stays sharp if you use it. If 90% of your day is orchestrating agents, fundamentals are at risk of atrophy or never being learned in the first place. Review those PRs, kids.

We've got a separate post coming on what all of this means for graduates and apprentices, because the picture is more nuanced than "AI killed entry-level coding". Short version: the junior-to-senior career ladder is no longer "be the best at writing efficient code". It's being the best at understanding messy human problems and turning them into something a machine can build. Which leads me to…

2. Non-tech backgrounds become an advantage

This is the prediction I'm most confident about, and most excited by.

Sam, my co-founder, is a linguist and the perfect embodiment of what I mean here. He's a product guru, he gets people, and he can translate human and system needs into work to be done faster than anyone, and certainly any AI, I've ever met. Between us, we've trained hundreds of non-STEM graduates and apprentices who have become some of the most talented Chief Problem Solvers I've ever worked with.

If the differentiating skills are problem framing, communication, domain knowledge and translating between humans and systems, those don't need a CS degree as a prerequisite. They need curiosity, empathy and the ability to learn fast, and it probably helps to be a bit of a tech nerd.

A lawyer who retrains at 35 has spent a decade understanding how people think under pressure. A nurse who pivots into HealthTech knows more about clinical workflows than any engineer ever could. A warehouse operator understands every hack they implemented to get the job done quicker, and understands it better than any SaaS developer could hope to.

A Harvard/BCG study from late 2023 found AI gave the bottom half of consultants a 43% performance lift, against 17% for the top half. Brynjolfsson's customer support work showed a 34% lift for bottom-quartile workers, against a 14% average across all workers and roughly zero for the top quartile. The direction is clear. AI is a great leveller for people with the right disposition, regardless of where they started.

By 2031 I expect to see consultancies, product teams and engineering departments where 50% of senior practitioners came from non-traditional backgrounds. They'll often be the most effective people in the room.

The "20+ years in tech" prerequisite for being a brilliant solutions architect is dissolving in front of us, and I say that as a 20+ years in tech solutions architect.

3. Whole agentic pipelines that humans don't touch

Today, "AI in production" usually means a human in the loop somewhere: reviewing PRs, approving deployments, checking outputs. Or at least it really should. By 2031 that won't be true for entire categories of software.

We'll be building and operating production agent pipelines that humans don't touch for weeks at a time, maybe ever. Customer onboarding, compliance monitoring, data reconciliation, invoice processing, support triage, even whole product features. They'll self-monitor, self-test, raise their own bugs, write their own fixes, and only kick to a human when something's genuinely outside their training parameters.
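To make "only kick to a human" a little more concrete, here is a minimal sketch of what an escalation rule for such a pipeline could look like. Everything in it is hypothetical: the incident categories, the confidence threshold and the retry budget are illustrative assumptions, not a description of any real system.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_FIX = "auto_fix"
    ESCALATE = "escalate_to_human"


@dataclass
class Incident:
    """Hypothetical incident raised by the pipeline's own monitoring."""
    category: str          # e.g. "invoice_mismatch"
    fix_confidence: float  # agent's self-reported confidence in its fix, 0..1
    attempts: int          # automated fix attempts made so far


# Illustrative policy values; a real system would need to tune and audit these.
KNOWN_CATEGORIES = {"invoice_mismatch", "duplicate_record", "timeout"}
MIN_CONFIDENCE = 0.9
MAX_ATTEMPTS = 2


def decide(incident: Incident) -> Action:
    """Auto-fix only familiar, high-confidence problems within the retry budget."""
    if incident.category not in KNOWN_CATEGORIES:
        return Action.ESCALATE  # unfamiliar territory: hand over to a person
    if incident.fix_confidence < MIN_CONFIDENCE:
        return Action.ESCALATE  # the agent is not sure enough of its own fix
    if incident.attempts >= MAX_ATTEMPTS:
        return Action.ESCALATE  # repeated failures suggest a deeper issue
    return Action.AUTO_FIX


print(decide(Incident("duplicate_record", 0.97, 0)))
print(decide(Incident("novel_regulatory_edge_case", 0.99, 0)))
```

The design choice worth noticing is that escalation is the default for anything unfamiliar, however confident the agent claims to be.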

The supporting evidence is on the curve. METR's research shows the length of tasks an AI can do reliably has been doubling every seven months for six years. As of early 2026, Claude Opus 4.6 can complete 14-and-a-half-hour coding tasks at 50% reliability. Project that forward 5 years and you're at multi-day, multi-step autonomous work no human can compete with.
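As a back-of-envelope check on that projection, here is a minimal sketch that extrapolates the two figures quoted above (a 14.5-hour task horizon and a seven-month doubling time) over five years. It assumes the doubling trend simply continues unchanged, which is an assumption rather than a forecast. The function name and the 40-hour working week used for the conversion are illustrative choices, not METR's.

```python
def projected_horizon_hours(current_hours: float, doubling_months: float, months_ahead: float) -> float:
    """Naive exponential extrapolation: the horizon doubles every `doubling_months`."""
    doublings = months_ahead / doubling_months
    return current_hours * 2 ** doublings


# Figures quoted in the text above; the unchanged trend is an assumption.
horizon = projected_horizon_hours(current_hours=14.5, doubling_months=7, months_ahead=60)
working_weeks = horizon / 40  # assumes a 40-hour working week (illustrative)

print(f"Doublings in five years: {60 / 7:.1f}")
print(f"Projected 50%-reliability horizon: {horizon:,.0f} hours (~{working_weeks:,.0f} working weeks)")
```

On these assumptions the horizon lands in the thousands of hours, so "multi-day" is, if anything, the conservative reading. The real uncertainty is whether the trend holds.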

It's not the agents-do-everything fantasy. There's a long tail of edge cases and reliability problems. I think the unit of human attention shifts from "the line of code" to "the running pipeline".

The hard bit isn't the building. The hard bit is the verification, oversight and accountability architecture around it.

That's also where the legal questions land. When a self-healing pipeline patches itself into a regulatory breach, or ships code with someone else's IP baked in, who's accountable? Today there's no clean answer. Which leads neatly to:

4. Self-healing security and automated AI defences become non-negotiable

This is the one that terrifies me most.

The offensive side of cybersecurity is already automated, AI-driven and operating at machine pace and scale. The defensive side has to match it. There is no version of the future where humans manually triage threats from automated attackers running thousands of simultaneous probes. We’re seeing supply chain attacks increase at a scary rate.

By 2031, every business of meaningful size will need self-healing security: systems that detect anomalies, isolate compromised components, patch themselves and learn from incidents without waiting for a human to read the alert. Automated AI defence isn't a nice-to-have. It's the table-stakes minimum to operate. For engineers, shift-left becomes truly non-negotiable. Every prototype, every PR, every deployment runs through automated security analysis the same way it runs through linting and tests today.

5. Perfect is the enemy of progress

There's a side to all of this we feel acutely from our defence and public-sector work.

The EU AI Act and the growing pile of sector-specific rules in healthcare and finance will shape who can deploy what, and how fast. Done well, that's protective. Done badly, it leaves regulated defenders stuck in treacle while adversaries, who couldn't care less about compliance, automate freely.

Enemies are already getting dangerous with automated attacks. The asymmetry between "what a hostile actor can ship tonight" and "what a regulated defender can ship this year" is going to be one of the most important and uncomfortable stories of the next five years. And it ties straight back to point 3: the same regulatory weight that slows defenders down also decides whether autonomous pipelines are allowed to operate at all.

This is also where a genuine new specialisation appears: AI-aware security engineers who understand how attackers use AI, how defenders need to use AI, and how the two co-evolve. A niche that barely exists as a career path today, and one of the most in-demand roles by 2031.

6. Rapid prototyping with real, shippable products becomes standard

Prototyping stops being a wireframe-and-mockup activity and becomes building actual, deployable products in parallel with the main roadmap.

A partner of ours was in our office recently, describing a frustrating challenge he knew should be fixable. It's the kind of thing you can already pay a large organisation tens of millions to solve with their do-it-all solution. That night I went home, vibe coded for 30 minutes, and brought a prototype in the next day. He said it needed a few changes, but was pretty much spot on. Shipping it would be insane, but without AI prototyping tools we'd never have validated the idea, or had the conversation about what "spot on" actually means.

Product teams can already run 5 to 10 small prototypes in parallel for every feature that ships. Most will be discarded, some will graduate, some will become products in their own right.

For engineers, that means comfort with ambiguity, comfort with throwing work away, and comfort with making a hundred small judgement calls a week. Prototype quality, MVP quality and production quality become three distinct disciplines, and we can't treat prototypes like pets.

We all become a little less scared of failure. That's only a good thing.

7. The unit of leverage changes: one engineer, many parallel tasks

The expectation is no longer "one engineer, one task". It's one engineer running 3 to 5 parallel tasks, each supported by its own agent pipeline and tooling.

You can already see the early version. Cursor hit roughly $2bn ARR with around 50 staff in early 2026. That's roughly $40m of revenue per employee, against a SaaS median around $125,000. Midjourney hit $200m ARR with 11 people. The "tiny team, huge output" pattern isn't a fluke.
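For anyone who wants to check the arithmetic, here is a minimal sketch that works out revenue per employee from the figures quoted above and compares it with the quoted SaaS median. The ARR and headcount numbers are taken from the text as given, not independently verified, and the function name is just illustrative.

```python
def revenue_per_employee(arr_usd: float, staff: int) -> float:
    """Annual recurring revenue divided by headcount."""
    return arr_usd / staff


SAAS_MEDIAN = 125_000  # per-employee median quoted in the text

# ARR and headcount as quoted in the text, not independently verified.
companies = {
    "Cursor": (2_000_000_000, 50),
    "Midjourney": (200_000_000, 11),
}

for name, (arr, staff) in companies.items():
    per_head = revenue_per_employee(arr, staff)
    multiple = per_head / SAAS_MEDIAN
    print(f"{name}: ${per_head / 1e6:.1f}m per employee, about {multiple:.0f}x the quoted median")
```

On those figures Cursor comes out at $40m per employee, 320 times the quoted median, and Midjourney at roughly $18m, about 145 times.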

I’m sure you can imagine this won’t be good for inequality and the wider economy.

I firmly believe the software engineering team doesn't shrink, it pivots. There's still as much demand for human judgement. There's just much more value created per hour of it. And as the value created per hour climbs, time-and-materials starts to feel like a strange way to charge for it. Value-based pricing ("what's it worth to have this problem solved?") becomes the more honest conversation.

We can already charge customers significantly less per hour for the same output we delivered two years ago. The same project gets delivered faster, with more options explored and more headroom for the unexpected. Our customers scale faster, recommend us to their friends, and more businesses scale as a result. We're busier than we've ever been, working with more customers than ever, and we don't see that changing. We're just working with them differently, as Chief Problem Solvers rather than pure coders.

So what do you do about it?

Five years isn't long. The interesting bit isn't whether any single prediction holds exactly as stated. I'm sure things I haven't thought of will dominate by 2031 in ways that make this whole post look ridiculous.

If you're an engineer, the most leveraged thing you can do this year is get fluent at orchestrating AI on real problems, and stay curious, nerds.

If you're from a non-tech career path but you're excited by the idea of being a Chief Problem Solver, the door is more open than it's ever been. Send us a message!

If you're running a business, the half-life of "we'll figure out AI next year" is measured in months. The firms still saying it in 2027 will be acquisition targets, not competitors.

We don't claim to have any of this fully figured out. We're an AI-first engineering team, moving and growing faster than we ever have, and still genuinely learning. Most importantly, we get to build cool stuff for more cool people than we ever have. How can that be a bad thing?

If you're reading this from 2031, hop back in time and let me know how I did.